The War Between Awareness and Memory

About a month ago Robert Scoble blogged about abandoning Twitter and Friendfeed. He said that he thought “real-time systems” like these and other micro-blogging tools were hurting long term knowledge. Turns out that he’s mostly worked up about the lack of archiving and quality of search.

On April 19th, 2009 I asked about Mountain Bikes once on Twitter. Hundreds of people answered on both Twitter and FriendFeed. On Twitter? Try to bundle up all the answers and post them here in my comments. You can’t. They are effectively gone forever. All that knowledge is inaccessible. Yes, the FriendFeed thread remains, but it only contains answers that were done on FriendFeed and in that thread. There were others, but those other answers are now gone and can’t be found.

This is not exactly the same idea as the theme in this post, because a lot of what bothers him can be solved technically. But there is evidence that faster, easier access to current awareness broadens our absorption of the present and thins out our access to the past. Simply put, too much of now means less and less memory.

This was quite dramatically illustrated about a year ago by sociologist of science James Evans, who published a paper in the journal Science entitled “Electronic Publication and the Narrowing of Science and Scholarship”. Evans analysed citation activity across several large databases of journals (including arts and humanities) through their evolving history. He wanted to see how scientists and scholars responded as journals were retroactively digitised and ever deeper back files became available online. How would online access influence knowledge discovery and use? One of his hypotheses was that “online provision increases the distinct number of articles cited and decreases the citation concentration for recent articles, but hastens convergence to canonical classics in the more distant past.”

In fact, the opposite effect was observed.

As deeper backfiles became available, more recent articles were referenced; as more articles became available, fewer were cited and citations became more concentrated within fewer articles. These changes likely mean that the shift from browsing in print to searching online facilitates avoidance of older and less relevant literature. Moreover, hyperlinking through an online archive puts experts in touch with consensus about what is the most important prior work—what work is broadly discussed and referenced. ... If online researchers can more easily find prevailing opinion, they are more likely to follow it, leading to more citations referencing fewer articles. ... By enabling scientists to quickly reach and converge with prevailing opinion, electronic journals hasten scientific consensus. But haste may cost more than the subscription to an online archive: Findings and ideas that do not become consensus quickly will be forgotten quickly.

Now this thinning out of long term memory (and the side effect of instant forgettability for recent work that does not attract fast consensus) is observed here in the relatively slow moving field of scholarly research. But I think there’s already evidence (and Scoble seems to sense this) that exactly the same effects occur when people and organisations in general get too-fast and too-easy access to other people’s views and ideas. It’s a psychosocial thing. We can see this in the fascination with ecologies of attention, from Tom Davenport to Chris Ward to Seth Godin. We can also see it in the poverty of attention that enterprise 2.0 pundits give to long term organisational memory and recordkeeping, in the longer term memory lapses in organisations that I have blogged about here in the past few weeks, and in the professional unreadiness of the records management profession for the challenges of preserving memory in faster moving, more fragmented knowledge environments (Steve Bailey’s work is a rare exception).

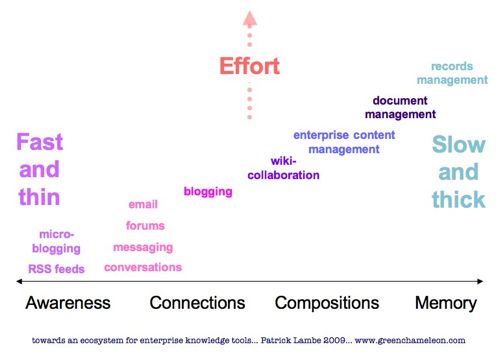

I find the concept of pace layering to be quite useful to get a handle on how different tools support different kinds of knowledge interactions in the enterprise. In particular, it helps to characterise both tools and knowledge as “faster” or “slower” moving. The diagram here tries to capture this in a simple way (it owes some ideas to a fascinating and instructive conversation with Olivier Amprimo back in April).

A pace layering view helps to sort out a spectrum of possibilities. It starts with awareness needs (which faster, more fragmented, more context-bound tools serve), and moves on to socialisation tools (thanks Olivier), which strengthen inter-personal connections, knowledge flows, and trust-warrants for where it is worth paying attention. From there we pass through collaboration (e.g. wikis) into more reflective solidification of knowledge assets, whether for near term use (documents) or long term memory (records).

This is a more subtle and interesting differentiation across a connected continuum than more bipolar frames allow. For example, if we look at the left of the diagram and think in terms of Wenger’s communities of practice, we can read his concept of participation, and on the right we can see his notion of reification. Or we can read Dave Snowden’s distinction between stocks and flows of knowledge. In both cases, I think the pace layering frame affords us a way of getting to grips a little more easily with how we manage two very different phenomena in the same environment, which a bipolar frame does not.

For example, it shows us more clearly how technology use and adoption over the past ten years has moved inexorably towards the left: awareness, speed and reduction of effort have driven technology adoption, in fact pushing us past our natural baseline (which is conversation) into more fragmented, noisy and less personal environments (which is not to say they are not personal). At the same time, effort, attention and adoption that favour slower content, longer and more stable memory support, and greater investment in the content we create, have steadily diminished. It’s hard to tell whether this technology-driven trend will continue, or whether the balance will shift back in favour of memory. It clearly hasn’t played out completely yet in the fragmented awareness space.

Certainly its advocacy has not, presumably on the assumption that old-school enterprise technology mindsets (very slow structures) are still withstanding the lures of the fast side, while real human beings are increasingly working outside those structures to satisfy their awareness, connections and collaboration needs.

The problem is that enterprise technology toolsets may be slow, but that’s the only thing they have in common with the right hand side knowledge activities in my diagram. The truth is, they are as bad at supporting reflection and memory as they are at supporting faster knowledge needs. Memory and coordination (i.e. avoiding big mistakes) are the abandoned babies crying in the corner, while the prophets of the new slug it out with the IT incumbents, obsessed as they are with risk and security and just keeping a handle on things.

Technology is the big, and possibly fatal, distraction for knowledge management.

I’ve blogged before about the value of seeing knowledge activity and tool use in an enterprise as an ecosystem. The pace layering approach, I believe, gives greater definition to this view, and it helps to avoid the “either/or” mentality that we so easily fall into when we see two broad categories. Even though we stretched out the spectrum of possibilities along a continuum in the diagram above, the essence of pace layering is that the layers co-exist and inter-operate. Understanding this is fundamental to their effective use.

For example, if we look again at the more fragmented end of the spectrum on the left, we could characterise this knowledge as noise, or we could characterise it as interstitial knowledge – knowledge that exists and operates in the interstices and gaps of more formally constructed (slower) knowledge, and helps people connect and interpret the pieces of that knowledge. Attention flows from fast to slow and in between, aided by such interstitial tools.

This is close to Bonnie Nardi’s notion of “invisible work”, which is constituted by all the non-formal connecting and adapting things we do between the formally defined and documented tasks in our jobs. Neglecting invisible work can be catastrophic in system design (because you design for work environments that don’t really exist) and in knowledge management (because you don’t see where critical performance knowledge lies). Invisible work is interstitial work.

What Twitter and other fragmented “real-time” tools provide is a way of capturing and scaling the knowledge aspects of this kind of invisible work, making it accessible and valuable beyond the immediate context in which it is performed. They are interstitial tools. That makes them maddening, ephemeral and distracting. Perhaps Scoble was wrong to expect memory from them; that’s just not what they are for. More interesting is what they enable, if we connect them to the broader suite of tools and activities across the spectrum.

So at the level of technology adoption and use, there is evidence that a rush toward the fast and easy end of the spectrum places heavy stresses on collective memory and reflection. At the same time, interstitial knowledge can also maintain and connect the knowledge that makes up memory. Bipolarity simply doesn’t work. We have to figure out how to see and manage our tools and our activities to satisfy a balance of knowledge needs across the entire spectrum, and take a debate about technology and turn it into a dialogue about practices. We need to return balance to the force.

6 Comments so far

- Patrick Lambe

Olivier Amprimo has blogged on some of the innovative thinking he and his team have been doing on the use of social software to support sustainable online communities. His ideas on tools for exposition and socialisation clarified my thinking on the left hand side of my diagram in the post above.

http://venividiluxi.com/en/?p=110

- Patrick Lambe

Jack Vinson has an interesting commentary over at http://blog.jackvinson.com/archives/2009/07/29/what_did_you_say_at_532_on_june_10th.html

- Bill Proudfit

The chart is very useful and ends up with records management. I’ve spent 20 years ‘doing’ RM in large organizations and what I’ve learned is that for it to have any value you have to start talking, thinking, planning, scheduling, retaining, disposing in the beginning. If you wait until the end to ‘do’ RM it will always fail. Decisions need to be made up-front at the point of creation whenever possible.

- Patrick Lambe

I agree with you Bill, this is something that came up more clearly in a discussion over at Greg Lambert’s blog, where he points out that “you should only highlight (store and archive) those things that you think you’ll have to recall later on”.

My point in response was that this means knowledge workers need to become more literate about the kinds of knowledge they are using, what they are fit for, and if for memory, treat them accordingly from the point of creation.

http://www.geeklawblog.com/2009/07/capturing-knowledge-if-youve.html

GC - thanks for this interesting post. This is certainly an important topic for people in the field of KM.

My initial thought is conversations that share experiential knowledge provide the ‘glue’ between fast, in-the-moment digital thoughts and slow, but reflective documentation.

All the more reason to continue to build our capability for meaningful conversation. This also makes me wonder about ways to speed up knowledge transfer conversations while retaining their reflective value...to keep us from becoming unglued.

kent


From Tony Joyce:

Patrick,

A most interesting set of observations. Effort is but one dimension of knowledge ecosystems; it varies between individual and group activities. Our knowledge tools are better at one or the other, but there is no tool that can handle both. That doesn’t stop inventive minds from searching for more flexible tools (and there is also a lot of money to be made).

But knowledge is formed not by effort alone. It is formed in process. Those processes are what adjust the balance between what we know and what we don’t know. This balancing occurs in both types of efforts, individual and group. Through this evidence we can see that there must be another dimension to be discovered. Or perhaps I should say to be uncovered in another posting to come?

regards, tony

Posted on July 30, 2009 at 11:31 AM